SKI mag survey results

Good humor.

The east rankings are also up:

https://www.skimag.com/ski-resort-life/best-in-the-east-overall-1-10-2018

https://www.skimag.com/ski-resort-life/best-in-the-east-overall-11-20-2018

- Smuggs

- Sugarbush

- Mount Snow

- Tremblant

- Jay Peak

Amusing — Snow at third.

- Joined

- Apr 24, 2017

- Posts

- 1,428

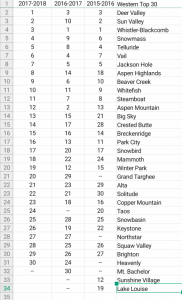

For the West I was surprised to see Sun Valley at #2 and Big Sky at #13. I was equally surprised to see Aspen Mt, Jackson and Steamboat that "low".

As for the East, I was surprised to see Smuggs at the top and Mt Snow at #3. Also surprised to see Mad River at #6 and Loon at #10. Also found it interesting for Stowe to be down at #8 and Whiteface down at #13.

IMO it reinforces the joke these Ski Magazine rankings have become (perhaps they were always a joke). Now that multi-mountain passes are making some headway, it seems like the independents doled out the $$ to Ski Magazine. What does it tell you when the #1 resort in the East does not have a single high-speed chairlift and has just about no après-ski options, not to mention that it feels like they have not invested a single $ into the place in three decades? Let me know when Smuggs hosts a Winter Olympics, or two.

What criteria did they use to form these rankings?

Or is it based on which resort pays the most in advertising?

Stowe came in at number eight?

Actually, other than the totally inadequate lifts and being hard to get to, Smuggs is a great mountain.

Maybe it means terrain is more important than the ancillary stuff. I've never been to Smugglers', but it looks like the survey respondents who picked the resort value the skiing, not the après. Which is a good thing. Normally people on ski forums blow off the results because the resort has been rated on the quality of its pie and WiFi.

You have to remember that these results are based on READER opinions, not the magazine's editors. (Unless some former SKI employee wants to tell us otherwise.) Now, the "quality" of SKI's readership over the years has certainly been questioned. Elsewhere in this forum it seemed that most of us receive the magazine for free and don't look at it. But now that anyone can go online and fill out the survey, you can't even assume the respondents are readers/subscribers. More likely the resorts that do well have either more visitors or more FANS on their Facebook feed that they can whip into filling out the survey.

I actually find the patterns of placement over the years more interesting than the relative rankings. Is a place consistently ranked the same, or was there a wild swing? What caused that? A better PR person or more snow? Take a look at Aspen and Sun Valley. Isn't that interesting?

We go through this every year, so I'm repeating last year's comments, which I saved from Epic. I responded as a subscriber 4x in the '90s, maybe early '00s. The paper forms back then had room for 6 areas, and the instructions were to rate all areas skied in the past 2 seasons. I made photocopies and usually reviewed 20-25 areas.

The overall ratings are flawed because each of SKI's 15-odd categories is weighted equally, and the non-ski-related categories can outnumber the snow, terrain, lifts etc. This methodology will keep Alta/Snowbird etc. down in the 20 ranking range no matter who fills out the surveys. So no, it's not Deer Valley's advertising budget; it's SKI Magazine cooking the categories to favor areas like Deer Valley.

Zrankings includes some non-ski related criteria too (notably access and ski town quality) but the overall formula (I don't know the weightings) seems to put the places noted for snow and tough terrain at or near the top.

The individual category lists make more sense. And the "overall satisfaction" list looks more like the Zrankings list than SKI's equal category weight overall list. These factors lead me to believe that the self-selected survey respondents are probably rather similar to the community of active posters here. It takes some time and effort to fill out the survey (especially for 20+ areas as I did), and mainly people who care a lot about skiing will do that. I don't think all that many of the casual, one-week-a-season destination skiers are responding.

Variation year to year is mostly due to who fills out the survey IMHO. If it's due to snow conditions, you probably won't be able to see a pattern by season except in extreme cases like the 4 consecutive bad years at Tahoe. The variation within a season is usually much greater than the season-to-season variation, and it's fairly random whether most people get a lucky or unlucky week for their advance-scheduled trips. And people don't even have the same definition of what constitutes lucky. Whistler always gets lousy survey scores for weather, but if you like seeing abundant coverage of steep terrain and lots of fresh snow, where do you think that comes from?

So, @TonyC, you're saying that the "overall" results are not the aggregation of the Overall Satisfaction questions?

No, they are not. They are an equal weighting of the individual categories, and this has been a known fact for well over a decade on these threads. Thus my editorial lead-in.

With at least half the categories being non-skiing-related, the SKI Magazine rankings will always inspire a knee-jerk reaction of derision among the avid ski community. When I was working on that project two years ago, I rounded up several old SKI Magazine surveys and used them as a guideline for some of the non-ski-related categories. However, I'm in philosophical agreement with Chris Steiner of Zrankings. If we can construct an objective measure for a category, we should try to do that.

The as-yet-undeveloped project had the right idea for overall rankings. Let the end user choose the importance weightings.
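
To make the weighting point concrete, here is a minimal sketch in Python. Everything in it is made up for illustration: the two resorts, the six category names, the scores and the "avid skier" weights are assumptions, not SKI Magazine or Zrankings data, and the real survey has more categories. It only shows how the same category scores can produce a different order once the reader supplies the weights.

```python
# Hypothetical category scores (0-10) for two invented resorts.
# None of these numbers come from SKI Magazine or Zrankings.
scores = {
    "Resort A": {"snow": 9.0, "terrain": 9.0, "lifts": 6.0,
                 "dining": 5.0, "lodging": 5.0, "apres": 4.0},
    "Resort B": {"snow": 6.0, "terrain": 6.0, "lifts": 8.0,
                 "dining": 9.0, "lodging": 9.0, "apres": 9.0},
}


def overall(category_scores, weights=None):
    """Weighted mean of the category scores.

    With weights=None every category counts the same, which is the
    equal-weighting approach described in the thread.
    """
    if weights is None:
        weights = {name: 1.0 for name in category_scores}
    total_weight = sum(weights.get(name, 0.0) for name in category_scores)
    if total_weight <= 0:
        raise ValueError("weights must sum to a positive number")
    weighted_sum = sum(
        score * weights.get(name, 0.0)
        for name, score in category_scores.items()
    )
    return weighted_sum / total_weight


def rank(all_scores, weights=None):
    """Resort names ordered from best to worst overall score."""
    return sorted(
        all_scores,
        key=lambda resort: overall(all_scores[resort], weights),
        reverse=True,
    )


# Hypothetical weights for a reader who cares mostly about the skiing.
skier_weights = {"snow": 5, "terrain": 5, "lifts": 3,
                 "dining": 1, "lodging": 1, "apres": 1}

print("Equal weights:", rank(scores))
print("Skier weights:", rank(scores, skier_weights))
```

With equal weights the three non-skiing categories carry Resort B to the top; with the skier's weights Resort A comes out ahead. Nothing about the underlying scores changed, only who decides what matters.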

EAST

1 - Smugglers Notch

2 - Sugarbush

3 - Mount Snow

4 - Tremblant

5 - Jay Peak

6 - Mad River Glen

7 - Sugarloaf

8 - Stowe

9 - Okemo

10 - Loon

11 - Bretton Woods

12 - Killington

13 - Whiteface

14 - Sunday River

15 - Stratton

16 - Holiday Valley

17 - Waterville

18 - Cannon

19 - Wildcat

20 - Wachusett

So, what are they doing with the Overall Satisfaction ratings? (Maybe it's published in the magazine in fine print?) And, you say they are giving all the categories equal weight, but they are asking how important each item is to you as well. What are they doing with that data? They've got two other methods for determining "best" overall -- the Overall questions themselves, and use of the survey respondents' weightings. Why ask for the data if they don't use it?

I asked once why SKI Magazine doesn't use the respondents' weightings to determine "best overall" and never got an answer. I have had a fair amount of interaction with print media and it's a VERY frustrating process. They do what they want to do, editing is done by people up the food chain from your contact person, and key points are routinely omitted or misstated. Pointed questions like yours are nearly always ignored.

For the ski area rating project two years ago I contacted SKI Magazine and asked for the complete results by category instead of the top 5 or 10 they show online or in print. I was told that they just collect the data and subcontract it out for processing, not always to the same company. I was willing to take the results of ANY recent season, whatever was most convenient on their end. Despite two phone conversations with the editor and several e-mails, I got nothing. As for the "equal weighting" method, that has been going on for at least 20 years so don't expect that to change, even though they have been asking the respondents about category importance most of that time. Category definitions have been tweaked from time to time but there have always been a relatively large number of non-skiing related categories.

The SKI Magazine survey is a subject I personally let go of long ago. I participated in 4 of them and see no need to make that effort again. It is what it is and it's not going to change. With both data collection and personal experience I can do a far better job of this myself, and I actually did most of that work two years ago.

Very happy to NOT see any of my regular stops on any of these lists at all. Nothing to see there... head on up to Wachusett!

There should be a campaign to see if we can get Mt. Wachusett into the top five for next year. PugSkiers unite!
